Introduction

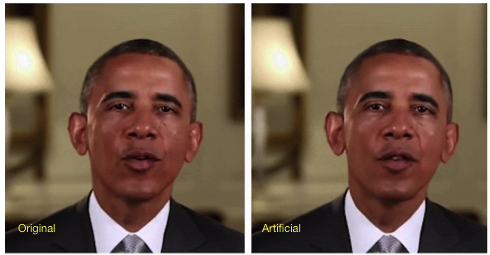

Have you ever seen a video of a politician or celebrity speaking on an issue and thought there’s no way it could really be them? If so, the video you watched may well have been a deepfake. A deepfake is a doctored video made with a form of artificial intelligence called deep learning, which is used to create convincing footage of events that never happened. Before getting into the good, the bad, and the ugly side of deepfakes, it’s helpful to know how they work.

To create a face-swap video, you first run thousands of face shots of two people through an AI algorithm called an encoder. The encoder learns the similarities between the two faces and reduces them to their shared common features, compressing the images in the process. A second kind of AI algorithm, called a decoder, is then taught to recover the faces from the compressed images. One decoder is trained to recover the first person’s face, and another decoder is trained to recover the second person’s face. To perform the face swap, the encoded images are fed into the “wrong” decoder. For example, a compressed image of person A’s face is fed into the decoder trained on person B. The decoder then reconstructs the face of person B with the expressions of person A. If done correctly, this creates a convincing deepfake.
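The shared-encoder, two-decoder idea can be sketched on toy data. This is only an illustration, not how real deepfake tools are built: instead of trained neural networks it uses PCA as the shared encoder and least-squares fits as the two decoders, and the "faces" are made-up vectors of six numbers. All names here (`make_faces`, `encode`, `fit_decoder`, `decode`) are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy "faces": six numbers each (an assumption for illustration only).
# Entries 0-3 carry the expression, entries 4-5 carry the identity.
EXPRESSION_BASIS = np.array([[1.0, 1.0, 0.0, 0.0, 0.0, 0.0],
                             [0.0, 0.0, 1.0, 1.0, 0.0, 0.0]])
IDENTITY_A = np.array([0.0, 0.0, 0.0, 0.0, 1.0, 0.0])
IDENTITY_B = np.array([0.0, 0.0, 0.0, 0.0, 0.0, 1.0])


def make_faces(identity, n=200):
    """Return n toy face vectors for one person, plus their expressions."""
    expressions = rng.normal(size=(n, 2))
    return identity + expressions @ EXPRESSION_BASIS, expressions


faces_a, expressions_a = make_faces(IDENTITY_A)
faces_b, expressions_b = make_faces(IDENTITY_B)

# Shared encoder: PCA over BOTH people's faces, compressed to 2 numbers.
pooled = np.vstack([faces_a, faces_b])
mean_face = pooled.mean(axis=0)
_, _, vt = np.linalg.svd(pooled - mean_face, full_matrices=False)
components = vt[:2].T  # shape (6, 2)


def encode(faces):
    """Compress faces to their shared low-dimensional features."""
    return (faces - mean_face) @ components


def fit_decoder(codes, faces):
    """Fit one person's decoder: a least-squares map from codes to faces."""
    design = np.hstack([codes, np.ones((len(codes), 1))])  # add a bias column
    weights, *_ = np.linalg.lstsq(design, faces, rcond=None)
    return weights


def decode(codes, weights):
    """Reconstruct faces from codes with a fitted decoder."""
    design = np.hstack([codes, np.ones((len(codes), 1))])
    return design @ weights


# One decoder per person, both trained on the same encoder's output.
decoder_a = fit_decoder(encode(faces_a), faces_a)
decoder_b = fit_decoder(encode(faces_b), faces_b)

# The swap: person A's encoded faces go through person B's decoder.
swapped = decode(encode(faces_a), decoder_b)
```

In this toy setup, each row of `swapped` carries person B's identity entries while its expression entries follow person A's. That is the same effect the "wrong decoder" trick aims for with real images.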

The Good

We’ll start by looking at the good side of deepfakes. In late October of this year, the journalistic platform De Correspondent published a speech by Prime Minister Mark Rutte. This speech was a deepfake, intended to show what real climate leadership can look like. De Correspondent said that the Dutch public desperately needs to hear this story from the real Mark Rutte because the current message of the Dutch government is not enough. By letting ‘Klimaat Rutte’ tell the honest story, De Correspondent hoped to spark a debate. They asked for the video to be shared widely, but they also made it clear to everyone that the video of Rutte is not real, and they asked other media outlets to say the same whenever they showed the speech. This example demonstrates how deepfakes can be used to encourage (or pressure) politicians to speak honestly about important issues.

The Bad

To analyse the bad side of this phenomenon, we’ll turn to how deepfakes are used in the world of politics. In 2019, a video that appeared to show Nancy Pelosi, the speaker of the US House of Representatives, drunkenly slurring her way through a speech was widely shared on the internet. It was in fact a digitally altered video, and it was quickly debunked. However, by then millions of people had already seen it, and Trump had even posted the clip on Twitter. This shows just how fast disinformation can spread now that it is becoming increasingly difficult to tell a real video from a deepfake. It also demonstrates how lifelike renderings of individuals can be used to disgrace politicians. Deepfakes could therefore be a real threat to democracy, as they can both cause and intensify the spread of disinformation.

The Ugly

We see the ugly side of deepfakes in their very origin: deepfakes were born in 2017 when a Reddit user posted doctored porn clips on the site. The videos swapped the faces of celebrities like Taylor Swift and Gal Gadot onto porn performers. Unfortunately, this was only the first example of how deepfake technology is being used as a weapon against women. In 2019, an AI firm called Deeptrace discovered that 96% of deepfake videos online were pornographic. What’s more, a victims’ charity in the UK found that the number of deepfake pornographic videos has increased by a third each year since 2019. These figures are deeply disturbing. Moreover, in many countries around the world, the laws on publishing explicit images without consent do not cover deepfake images. More needs to be done to protect victims whose faces have been doctored onto porn performers.

Conclusion

To sum up, deepfakes are becoming more common and more convincing. Before you finish reading, here are some tips to help you spot a deepfake. Firstly, check whether the person in the video is blinking: in many deepfakes, the person does not blink at the normal rate for humans. Secondly, are there any unusual movements from objects in the room? Finally, trust your gut: is this person behaving strangely or speaking out of character? I sincerely hope that in the future deepfakes will be used purely for entertainment and that using them to attack or damage anybody will be a thing of the past.

I loved reading this post. From an art-historical perspective, and taking authors like Barthes into account, deepfake imagery will definitely spark a revolution in the way we perceive images and attribute agency to them. Personally, I’m most interested in the ‘bad’ part of deepfakes. As you write, they can be used for propaganda purposes, while ‘ugly’ deepfakes can be used for blackmail and the like. I think that our current cultural perception of photos and videos lags behind the technological developments of the moment. In some sense, I am personally at peace with this development. As you say in your conclusion, the only way we can deal with ever-improving deepfakes is to think wisely and rely more on first-hand experience instead of possibly manipulated representations. This might call for a more direct, face-to-face way of going about life. If everything I see on Instagram is very likely to be fake, why use it at all?